You can download the R script here .

Some useful links for working with Stan:

Linear Regression

In this part, we are going to fit a simple linear regression model through Stan. Here you can download the .stan model.

First of all, let’s compile the model and save it into an R object.

<- rstan:: stan_model (file= "data/LM_Model.stan" )

S4 class stanmodel 'anon_model' coded as follows:

data{

int<lower = 0> n; // number of obs

int<lower = 0> K; // number of covariates (including the intercept)

vector[n] y; // response

matrix[n, K] X; // covariates

real a0;

real b0;

vector[K] beta0;

vector[K] s2_0;

}

parameters{

real<lower = 0> sigma2;

vector[K] beta;

}

transformed parameters {

vector[n] mu;

mu = X * beta;

}

model{

// Prior:

sigma2 ~ inv_gamma(a0, b0);

for(k in 1:K){

beta[k] ~ normal(beta0[k], sqrt(s2_0[k]));

}

// Likelihood:

y ~ normal(mu, sqrt(sigma2));

}

generated quantities{

vector[n] log_lik;

for (j in 1:n){

log_lik[j] = normal_lpdf(y[j] | mu[j], sqrt(sigma2));

}

}

We consider the Prestige data from the car package. Statistical units are occupations, for which we have:

education: average education of occupational incumbents, years, in 1971;

income: average income of incumbents, dollars, in 1971;

women: percentage of incumbents who are women;

prestige: Pineo-Porter prestige score for occupation, from a social survey conducted in the mid-1960s;

census: canadian Census occupational code;

type: type of occupation (bc, Blue Collar; prof, Professional, Managerial, and Technical; wc, White Collar).

Let’s transform the income variable so to reduce the asimmetry.

data ("Prestige" )<- complete.cases (Prestige),- 5 ]$ income <- log (Prestige2$ income)

In order to fit the stan model, we need to pass some data: - y: the response vector;

X: the design matrix;

n: the sample size;

K: the number of covariates (including the intercept term);

a0, b0, beta0, s2_0: hyperparameters.

<- Prestige2$ prestige<- lm (prestige ~ ., data= Prestige2)<- model.matrix (mod)<- nrow (X)<- ncol (X)

We further create a list containing the objects to be passed to the stan model.

<- list (y = y,X = X,n = n,K = K,a0 = 10 ,b0 = 10 ,beta0 = rep (0 , K),s2_0 = rep (10 , K)

We can now use the rstan::sampling function to fit the model. We what two chains, each of length 10’000, and we want to discard the first 50% as warm-up.

<- 10000 <- 2 <- rstan:: sampling (object = LM_Model,data = data.stan,warmup = 0.5 * n.iter, iter = n.iter,thin= 1 , chains = nchain,refresh = n.iter/ 2 #, pars=c("beta", "sigma2")

SAMPLING FOR MODEL 'anon_model' NOW (CHAIN 1).

Chain 1:

Chain 1: Gradient evaluation took 6.1e-05 seconds

Chain 1: 1000 transitions using 10 leapfrog steps per transition would take 0.61 seconds.

Chain 1: Adjust your expectations accordingly!

Chain 1:

Chain 1:

Chain 1: Iteration: 1 / 10000 [ 0%] (Warmup)

Chain 1: Iteration: 5000 / 10000 [ 50%] (Warmup)

Chain 1: Iteration: 5001 / 10000 [ 50%] (Sampling)

Chain 1: Iteration: 10000 / 10000 [100%] (Sampling)

Chain 1:

Chain 1: Elapsed Time: 1.664 seconds (Warm-up)

Chain 1: 1.91 seconds (Sampling)

Chain 1: 3.574 seconds (Total)

Chain 1:

SAMPLING FOR MODEL 'anon_model' NOW (CHAIN 2).

Chain 2:

Chain 2: Gradient evaluation took 1.5e-05 seconds

Chain 2: 1000 transitions using 10 leapfrog steps per transition would take 0.15 seconds.

Chain 2: Adjust your expectations accordingly!

Chain 2:

Chain 2:

Chain 2: Iteration: 1 / 10000 [ 0%] (Warmup)

Chain 2: Iteration: 5000 / 10000 [ 50%] (Warmup)

Chain 2: Iteration: 5001 / 10000 [ 50%] (Sampling)

Chain 2: Iteration: 10000 / 10000 [100%] (Sampling)

Chain 2:

Chain 2: Elapsed Time: 2.027 seconds (Warm-up)

Chain 2: 1.96 seconds (Sampling)

Chain 2: 3.987 seconds (Total)

Chain 2:

<- summary (LM_stan, pars = c ("beta" , "sigma2" ))$ summaryrownames (summ)[1 : K] <- colnames (X)round (summ, 3 )

mean se_mean sd 2.5% 25% 50% 75% 97.5% n_eff

(Intercept) -2.995 0.039 3.125 -9.125 -5.107 -2.982 -0.931 3.285 6276.714

education 4.295 0.007 0.460 3.406 3.980 4.293 4.600 5.207 3890.714

income 0.523 0.009 0.618 -0.725 0.112 0.531 0.941 1.723 4272.666

women -0.060 0.000 0.025 -0.109 -0.076 -0.060 -0.044 -0.012 6066.112

typeprof 6.148 0.035 2.311 1.566 4.607 6.151 7.680 10.692 4390.481

typewc -2.734 0.024 1.787 -6.250 -3.945 -2.754 -1.527 0.799 5749.410

sigma2 48.767 0.079 6.610 37.473 44.095 48.247 52.804 63.099 6980.744

Rhat

(Intercept) 1

education 1

income 1

women 1

typeprof 1

typewc 1

sigma2 1

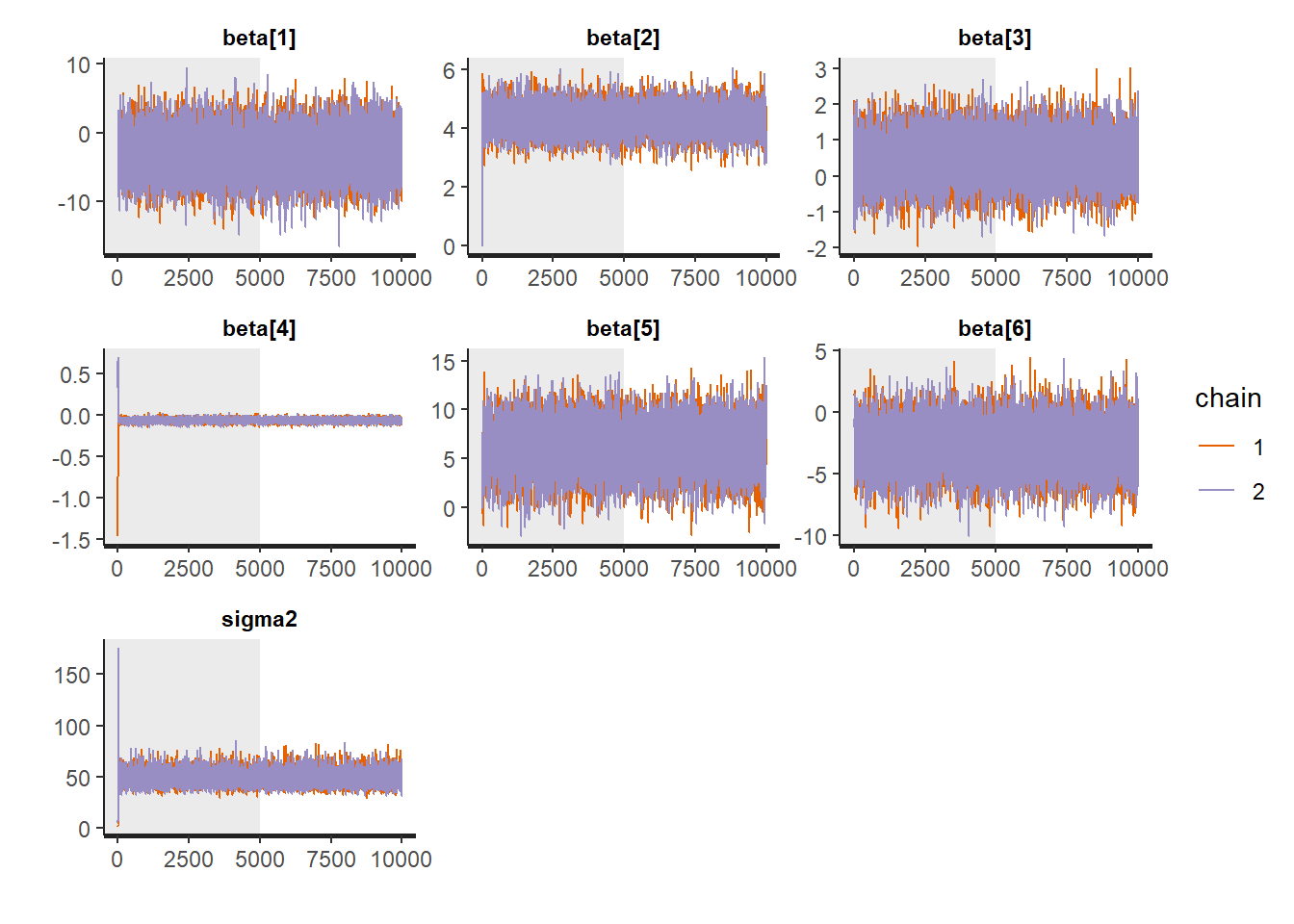

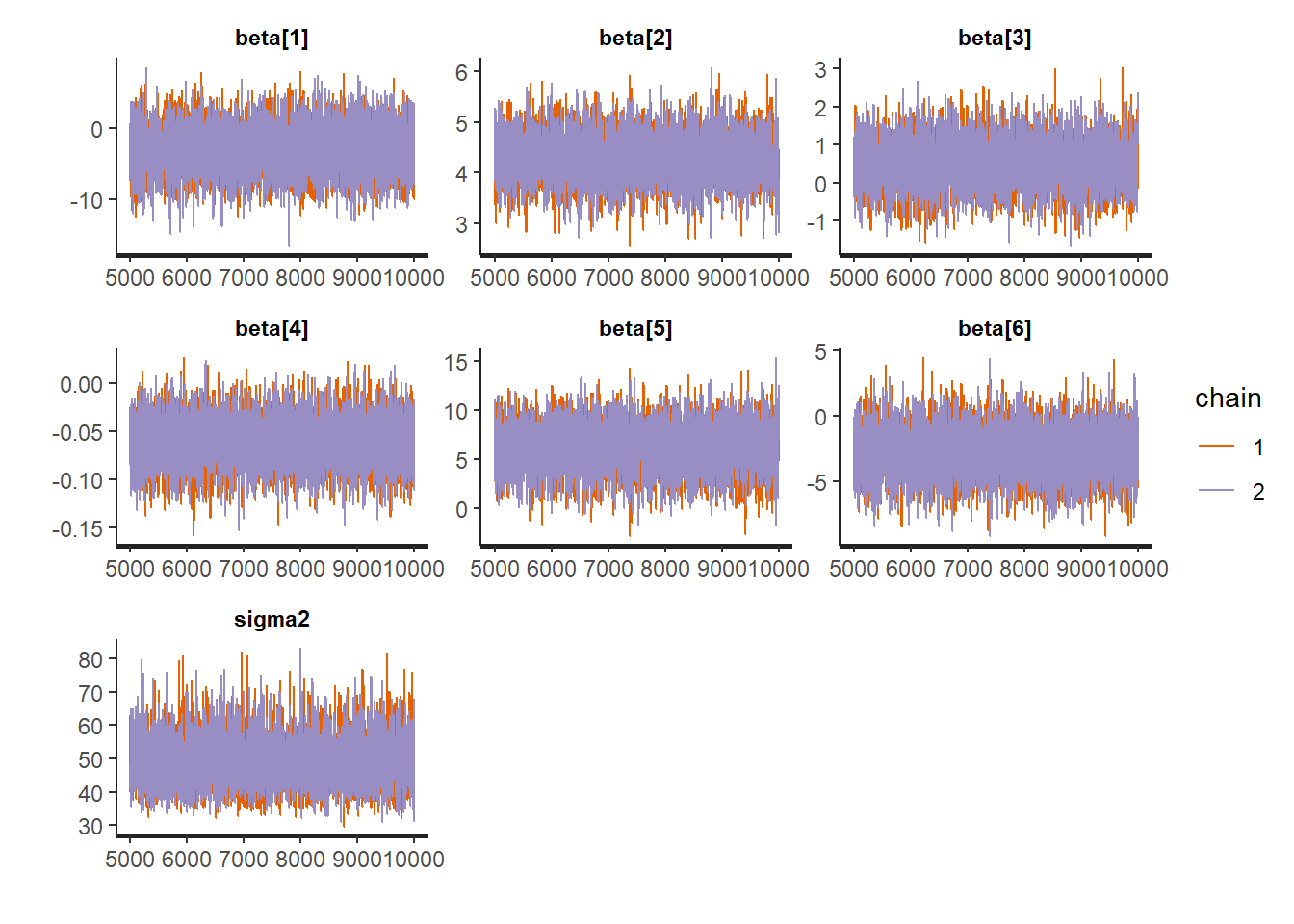

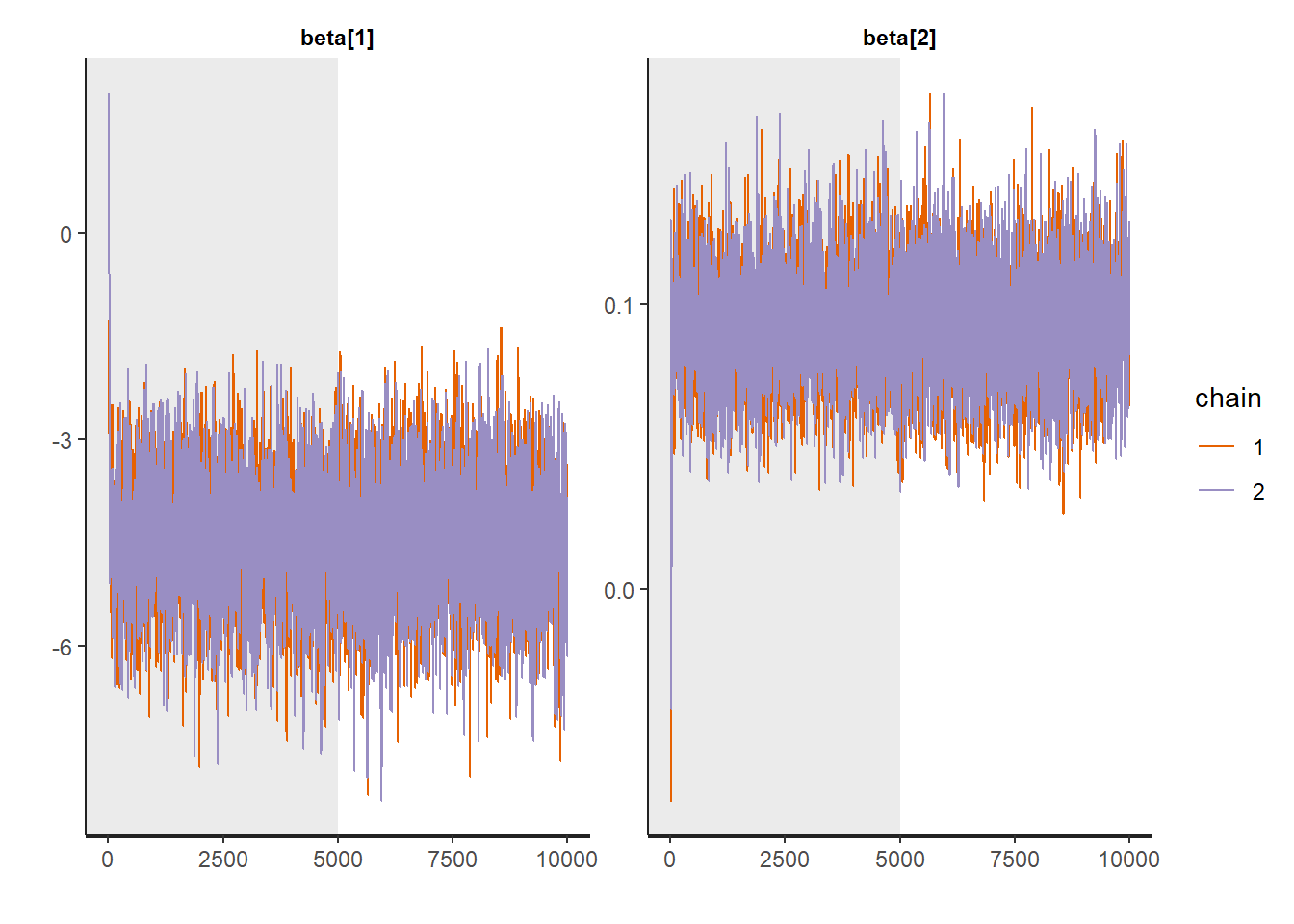

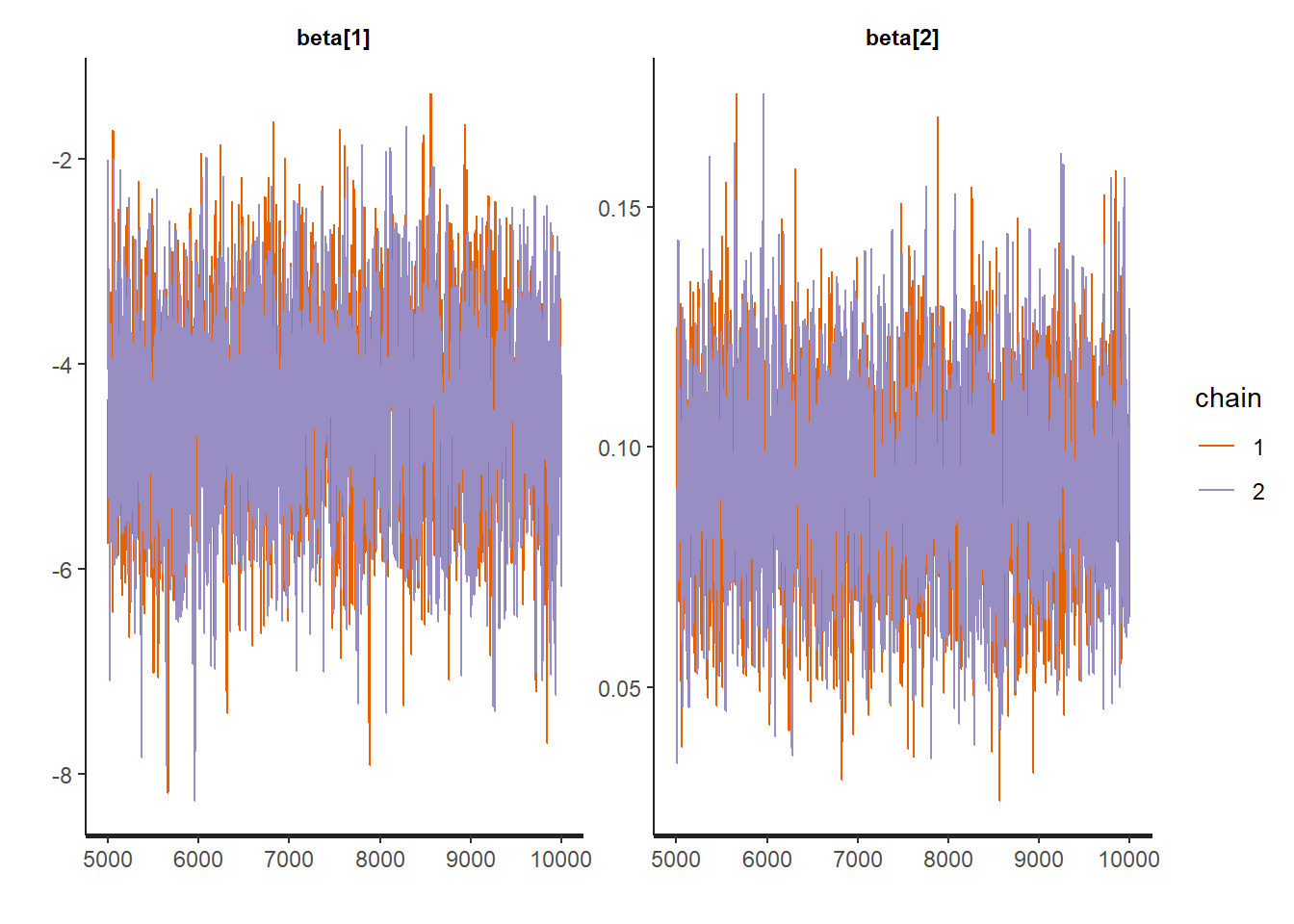

Traceplots:

:: traceplot (LM_stan, pars = c ('beta' , 'sigma2' ), inc_warmup = TRUE ):: traceplot (LM_stan, pars = c ('beta' , 'sigma2' ), inc_warmup = FALSE )

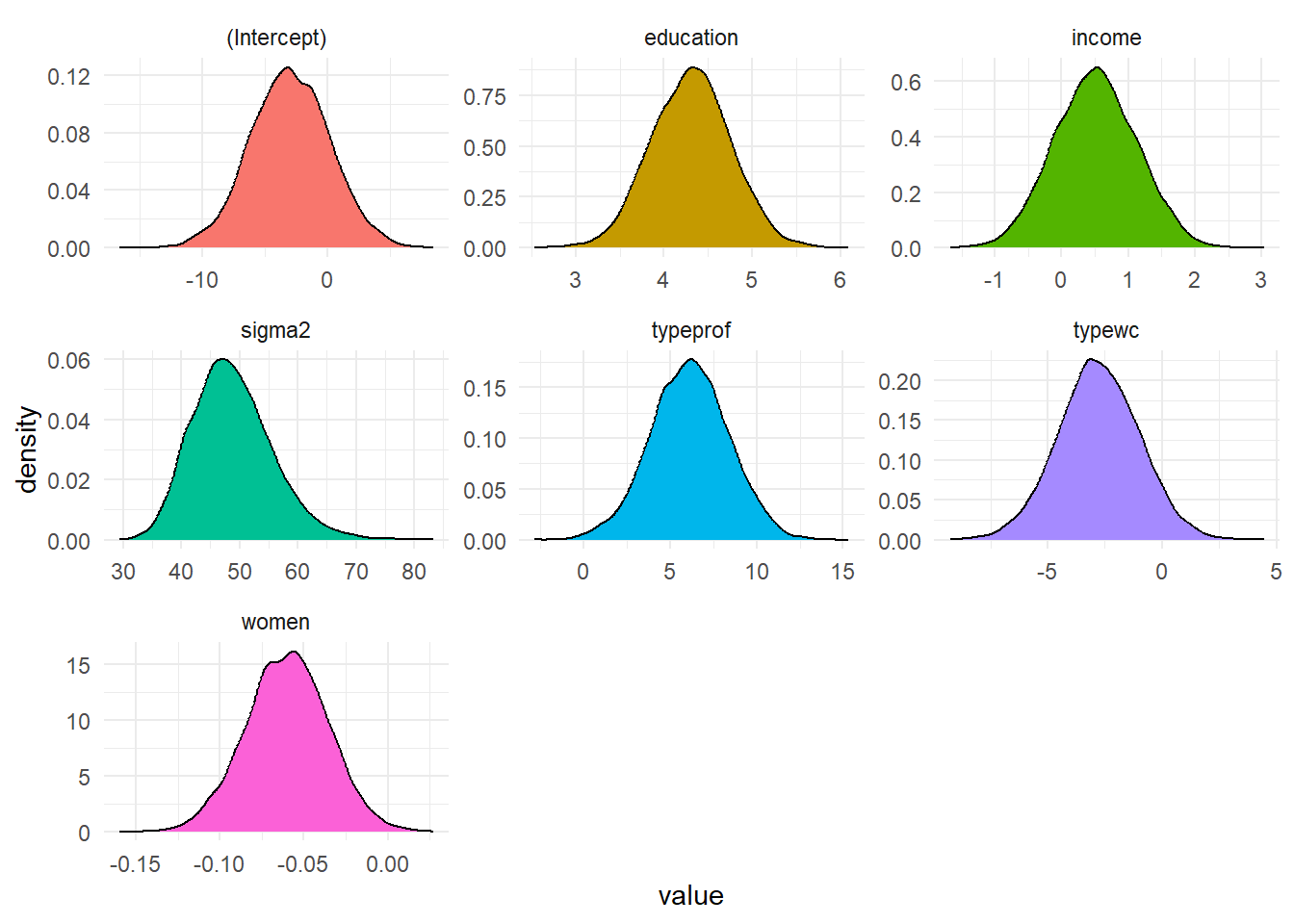

<- rstan:: extract (LM_stan, c ('beta' , 'sigma2' )) <- post_chain$ betacolnames (betas) <- colnames (X)<- post_chain$ sigma2<- data.frame (value = c (1 ], betas[,2 ], betas[,3 ],4 ], betas[,5 ], betas[,6 ],param = rep (c (colnames (X), 'sigma2' ), each = nrow (post_chain$ beta)))%>% ggplot (aes (value, fill = param)) + geom_density () + facet_wrap (~ param, scales = 'free' ) + theme_minimal () + theme (legend.position= "false" )

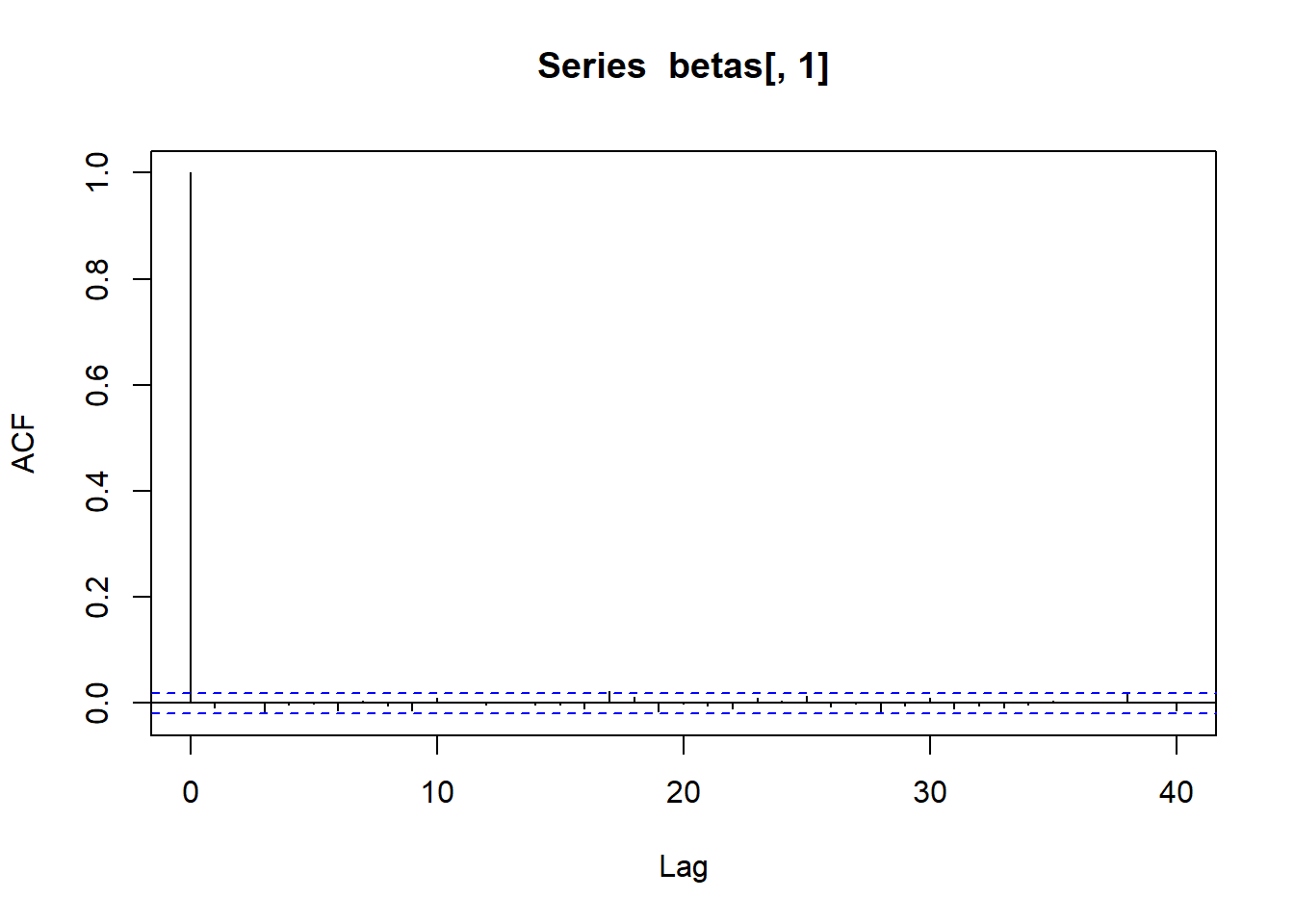

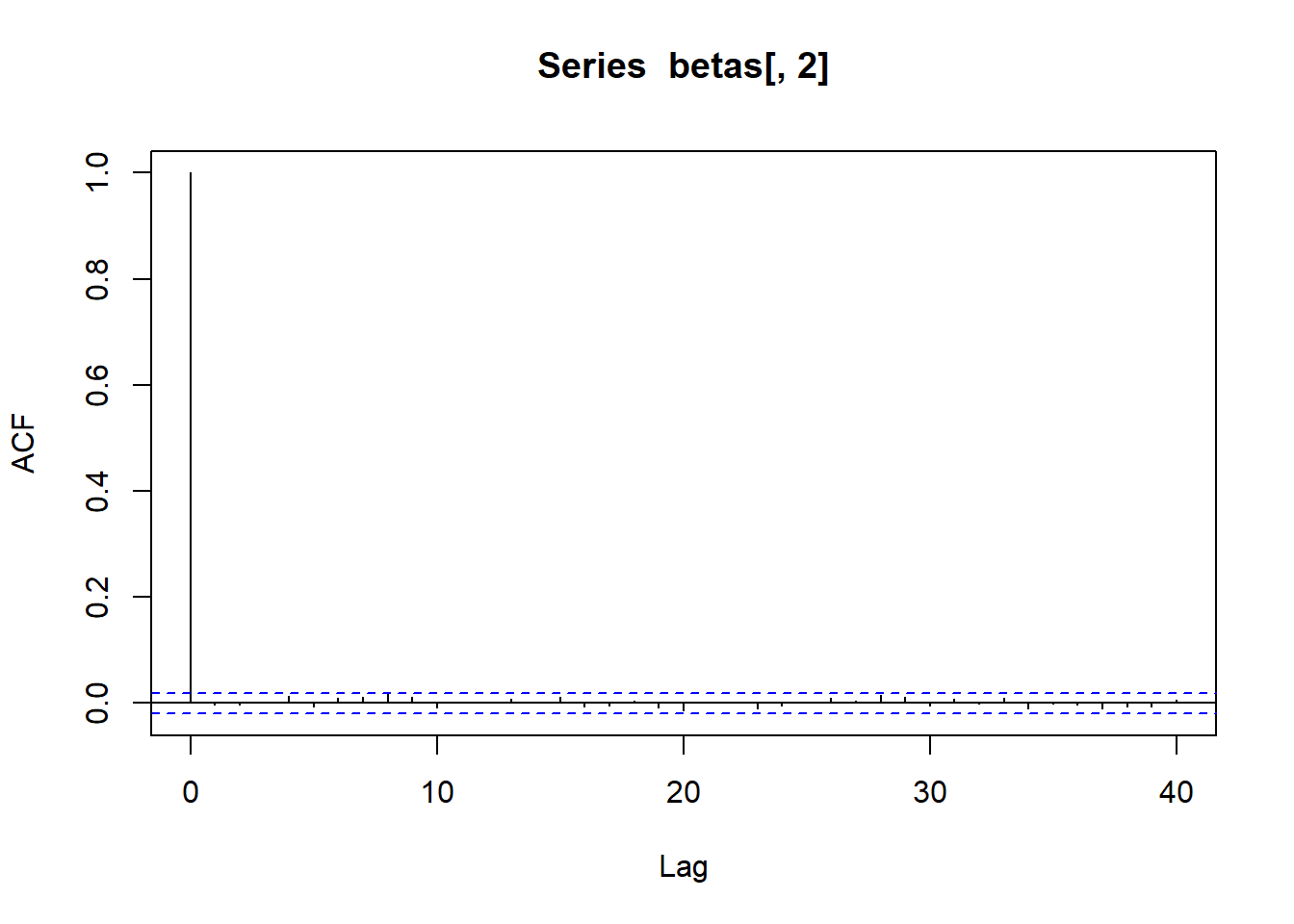

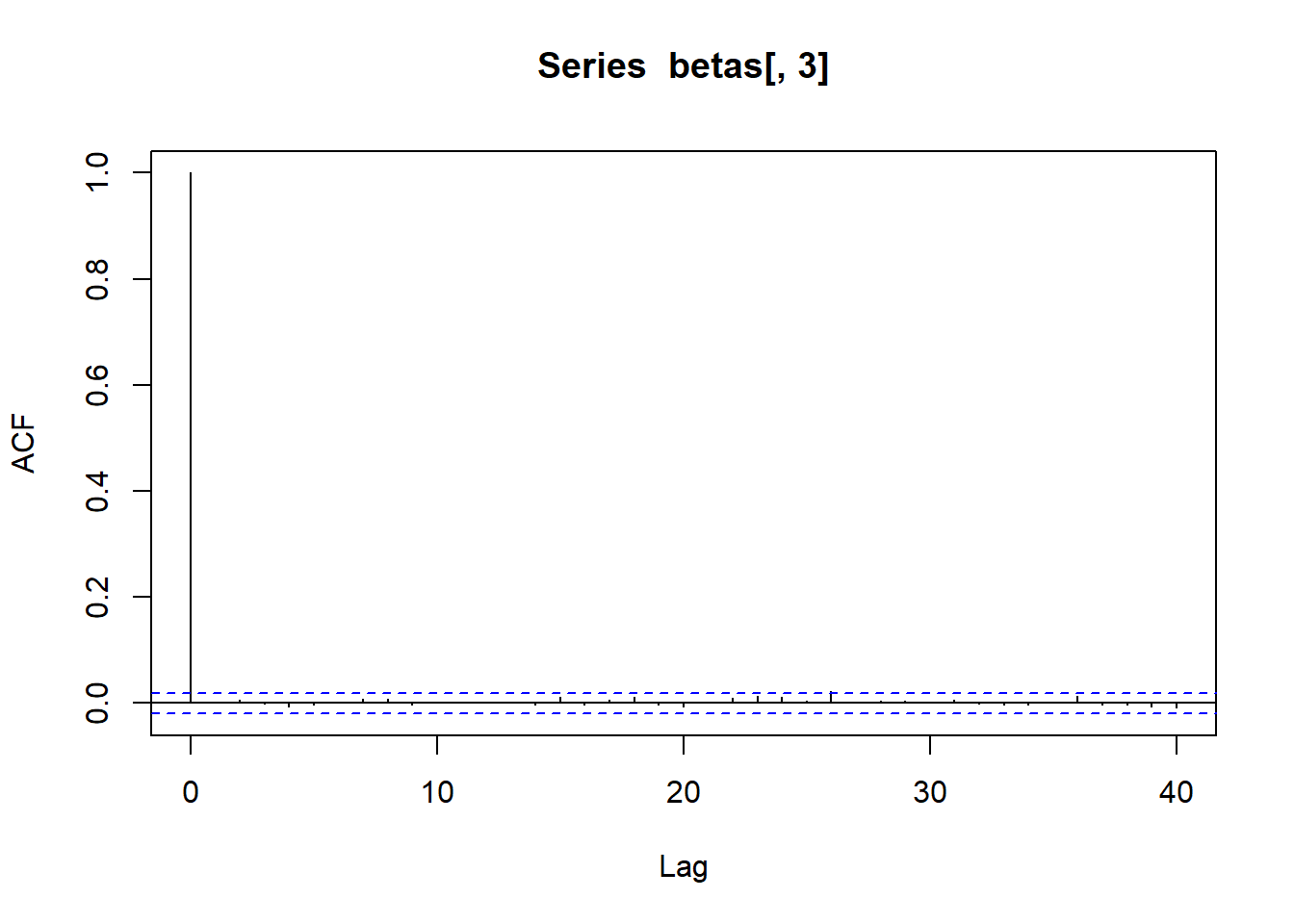

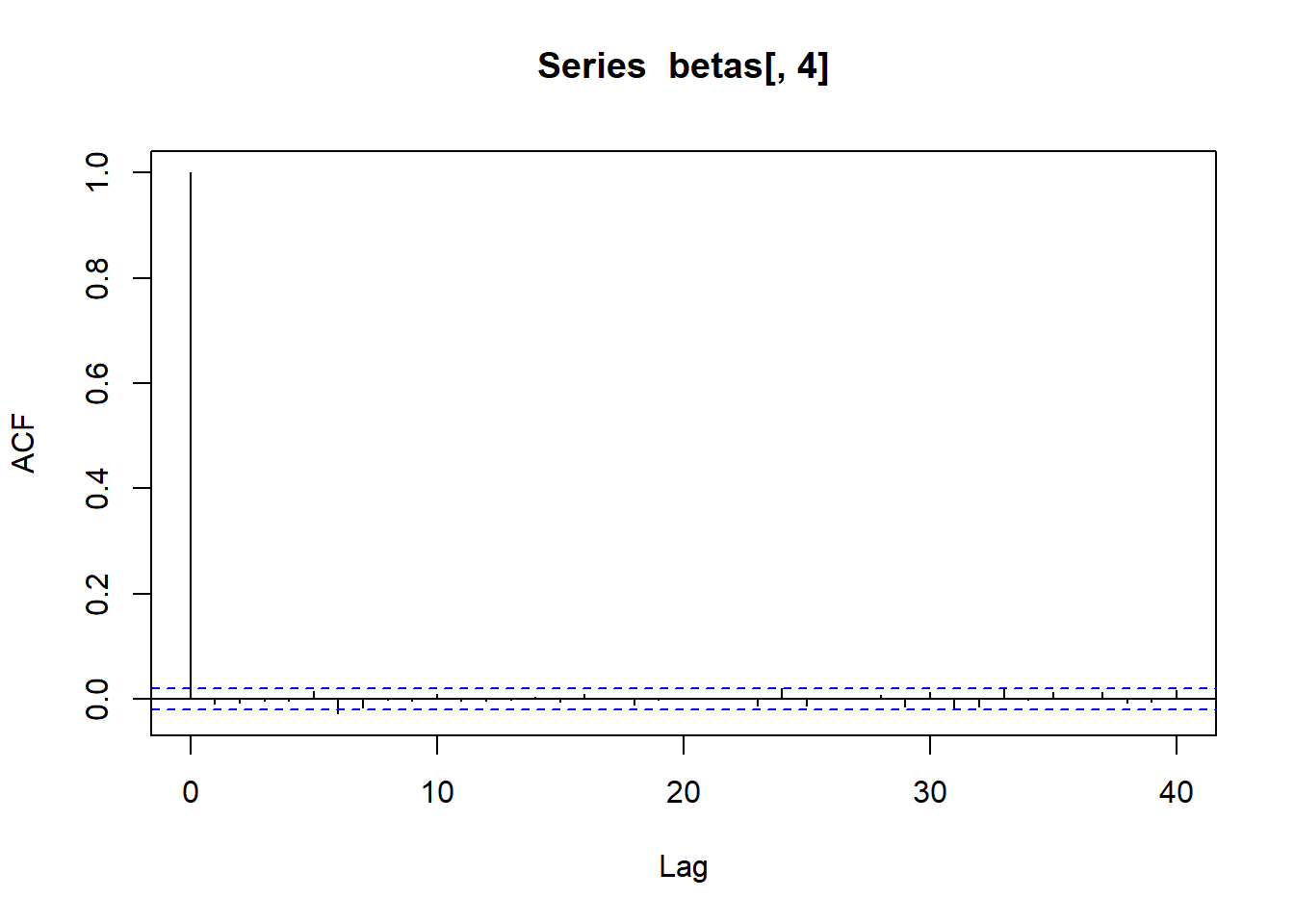

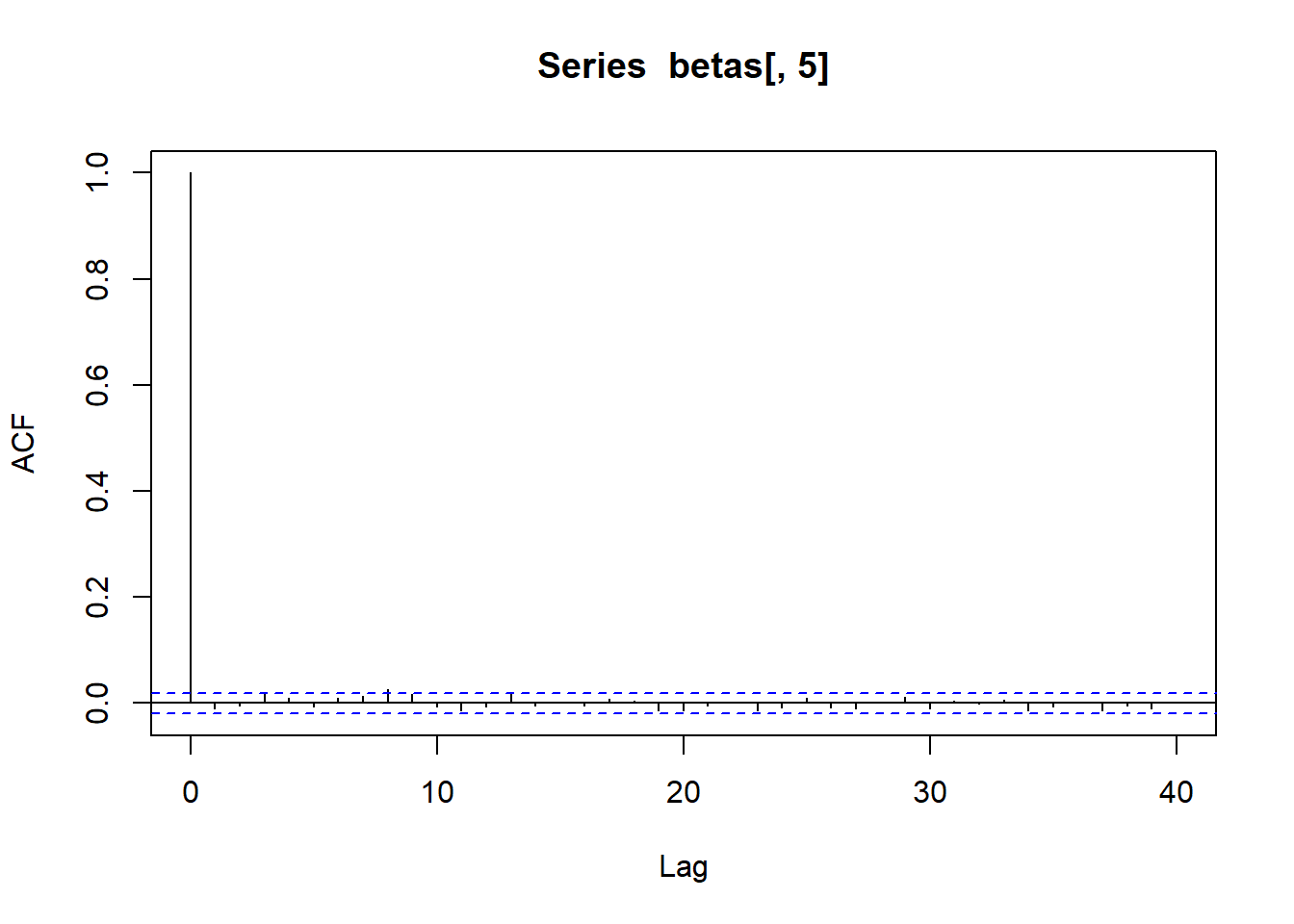

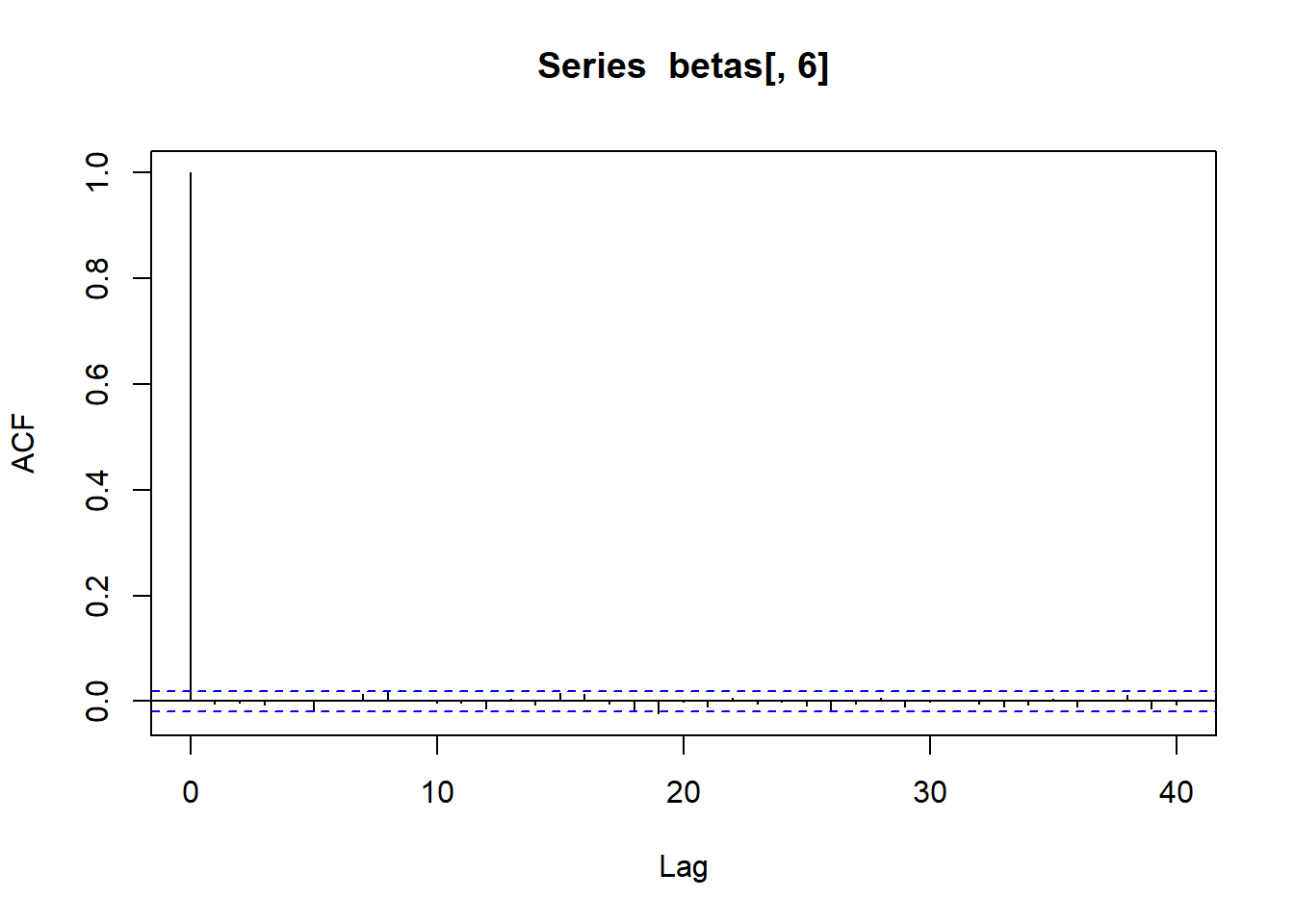

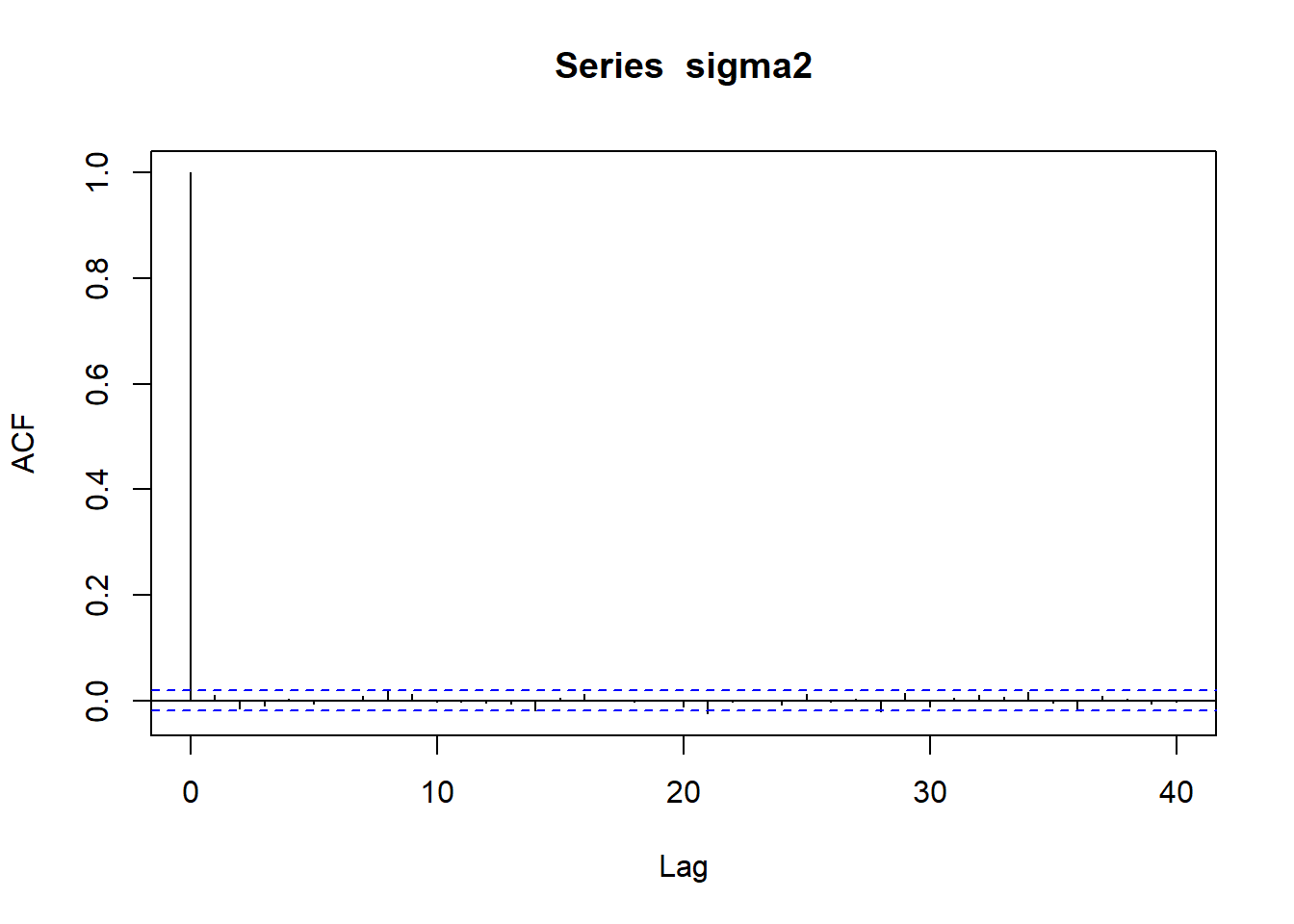

acf (betas[,5 ])acf (betas[,5 ])

As classical MCMCs, we can use the sampled values to approximate any quantity of interest. For example, we can approximate \(\mathbb{P}(\beta_1 > 4, \beta_3 > -0.5)\) :

mean (betas[,2 ] > 4 & betas[,4 ] > - .5 )

Logistic Regression

Let’s consider the logistic regression model to the cardiac dataset .

The Logistic_Model.stan model can be downloaded here .

rm (list = ls ())<- read.csv ("data/cardiac.csv" , header= T, sep= ";" )<- cardiac$ Chd<- model.matrix (lm (Chd ~ ., data= cardiac))<- nrow (X)<- ncol (X)

<- :: stan_model (file= "data/Logistic_Model.stan" )

S4 class stanmodel 'anon_model' coded as follows:

///////////////////////// DATA /////////////////////////////////////

data {

int<lower = 1> n; // number of data

int<lower = 1> K; // number of covariates (including the intercept)

int<lower = 0, upper = 1> y[n]; // response vector

matrix[n, K] X; // design matrix

vector[K] beta0;

vector[K] s2_0;

}

//////////////////// PARAMETERS /////////////////////////////////

parameters {

vector[K] beta; // regression coefficients

}

transformed parameters {

vector[n] eta;

vector<lower=0>[n] Odds;

vector<lower=0,upper=1>[n] p;

eta = X * beta;

for(i in 1:n){

Odds[i] = exp(eta[i]);

p[i] = Odds[i]/(1+Odds[i]);

}

}

////////////////// MODEL ////////////////////////

model {

// Prior

for (j in 1:K){

beta[j] ~ normal(beta0, sqrt(s2_0));

}

// Likelihood

for (s in 1:n){

y[s] ~ bernoulli(p[s]);

}

}

<- list (y = y,X = X,n = n,K = K,beta0 = rep (0 , K),s2_0 = rep (10 , K)

<- 10000 <- 2 <- rstan:: sampling (object = Logistic_Model,data = data.stan_logis,warmup = 0.5 * n.iter, iter = n.iter,thin = 1 , chains = nchain,refresh = n.iter/ 2

SAMPLING FOR MODEL 'anon_model' NOW (CHAIN 1).

Chain 1:

Chain 1: Gradient evaluation took 7.3e-05 seconds

Chain 1: 1000 transitions using 10 leapfrog steps per transition would take 0.73 seconds.

Chain 1: Adjust your expectations accordingly!

Chain 1:

Chain 1:

Chain 1: Iteration: 1 / 10000 [ 0%] (Warmup)

Chain 1: Iteration: 5000 / 10000 [ 50%] (Warmup)

Chain 1: Iteration: 5001 / 10000 [ 50%] (Sampling)

Chain 1: Iteration: 10000 / 10000 [100%] (Sampling)

Chain 1:

Chain 1: Elapsed Time: 2.063 seconds (Warm-up)

Chain 1: 1.669 seconds (Sampling)

Chain 1: 3.732 seconds (Total)

Chain 1:

SAMPLING FOR MODEL 'anon_model' NOW (CHAIN 2).

Chain 2:

Chain 2: Gradient evaluation took 2.5e-05 seconds

Chain 2: 1000 transitions using 10 leapfrog steps per transition would take 0.25 seconds.

Chain 2: Adjust your expectations accordingly!

Chain 2:

Chain 2:

Chain 2: Iteration: 1 / 10000 [ 0%] (Warmup)

Chain 2: Iteration: 5000 / 10000 [ 50%] (Warmup)

Chain 2: Iteration: 5001 / 10000 [ 50%] (Sampling)

Chain 2: Iteration: 10000 / 10000 [100%] (Sampling)

Chain 2:

Chain 2: Elapsed Time: 2.067 seconds (Warm-up)

Chain 2: 1.563 seconds (Sampling)

Chain 2: 3.63 seconds (Total)

Chain 2:

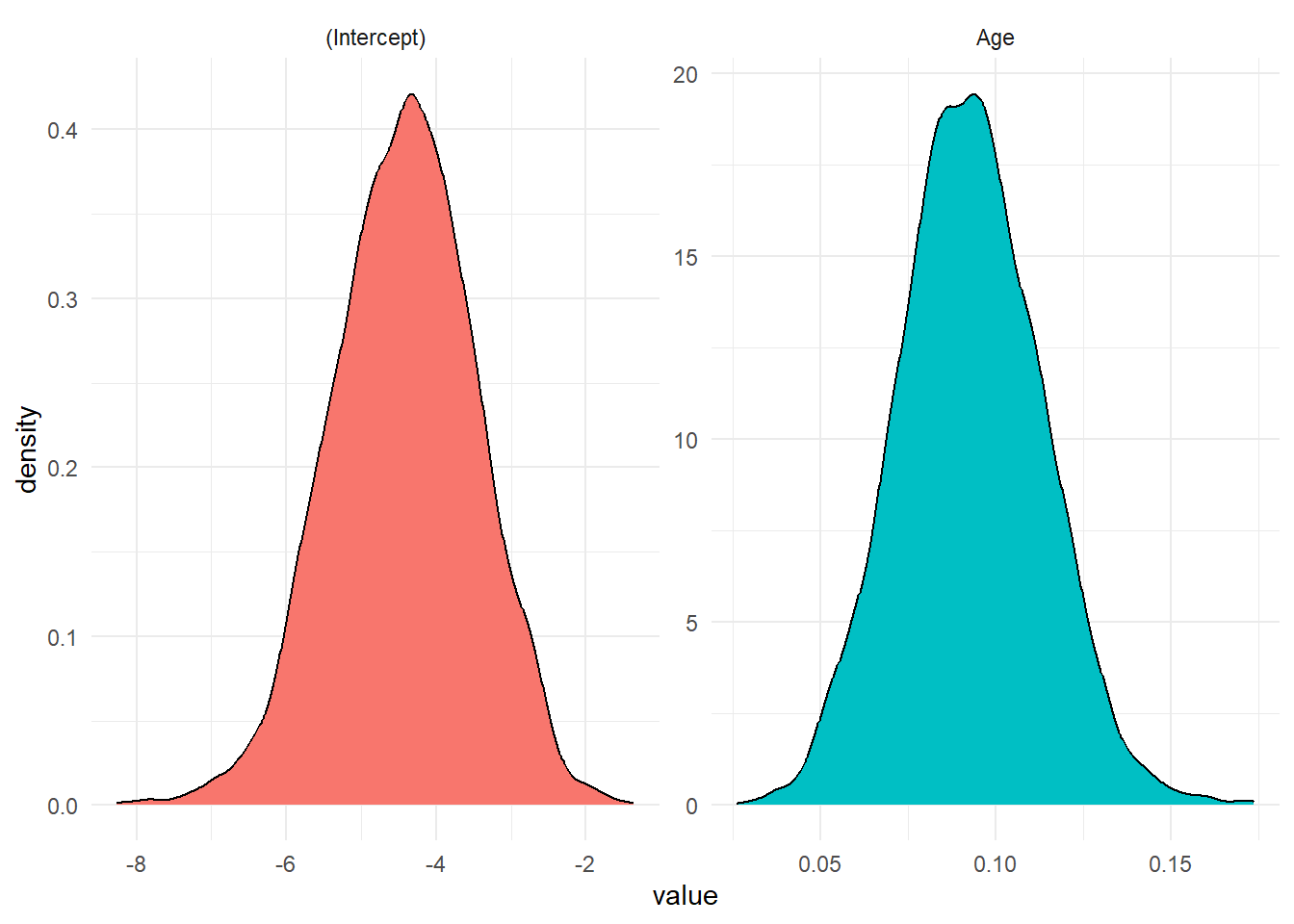

<- summary (Logistic_stan, pars = "beta" )$ summaryrownames (summ)[1 : K] <- colnames (X)round (summ, 3 )

mean se_mean sd 2.5% 25% 50% 75% 97.5% n_eff

(Intercept) -4.380 0.021 0.925 -6.234 -4.999 -4.353 -3.746 -2.637 1982.076

Age 0.092 0.000 0.020 0.054 0.078 0.091 0.105 0.131 1977.922

Rhat

(Intercept) 1.002

Age 1.002

:: traceplot (Logistic_stan, pars = "beta" , inc_warmup = TRUE ):: traceplot (Logistic_stan, pars = "beta" , inc_warmup = FALSE )

<- rstan:: extract (Logistic_stan, "beta" ) <- post_chain$ betacolnames (betas) <- colnames (X)<- data.frame (value = c (betas[,1 ], betas[,2 ]), param = rep (colnames (X), each = nrow (post_chain$ beta)))%>% ggplot (aes (value, fill = param)) + geom_density () + facet_wrap (~ param, scales = 'free' ) + theme_minimal () + theme (legend.position= "false" )

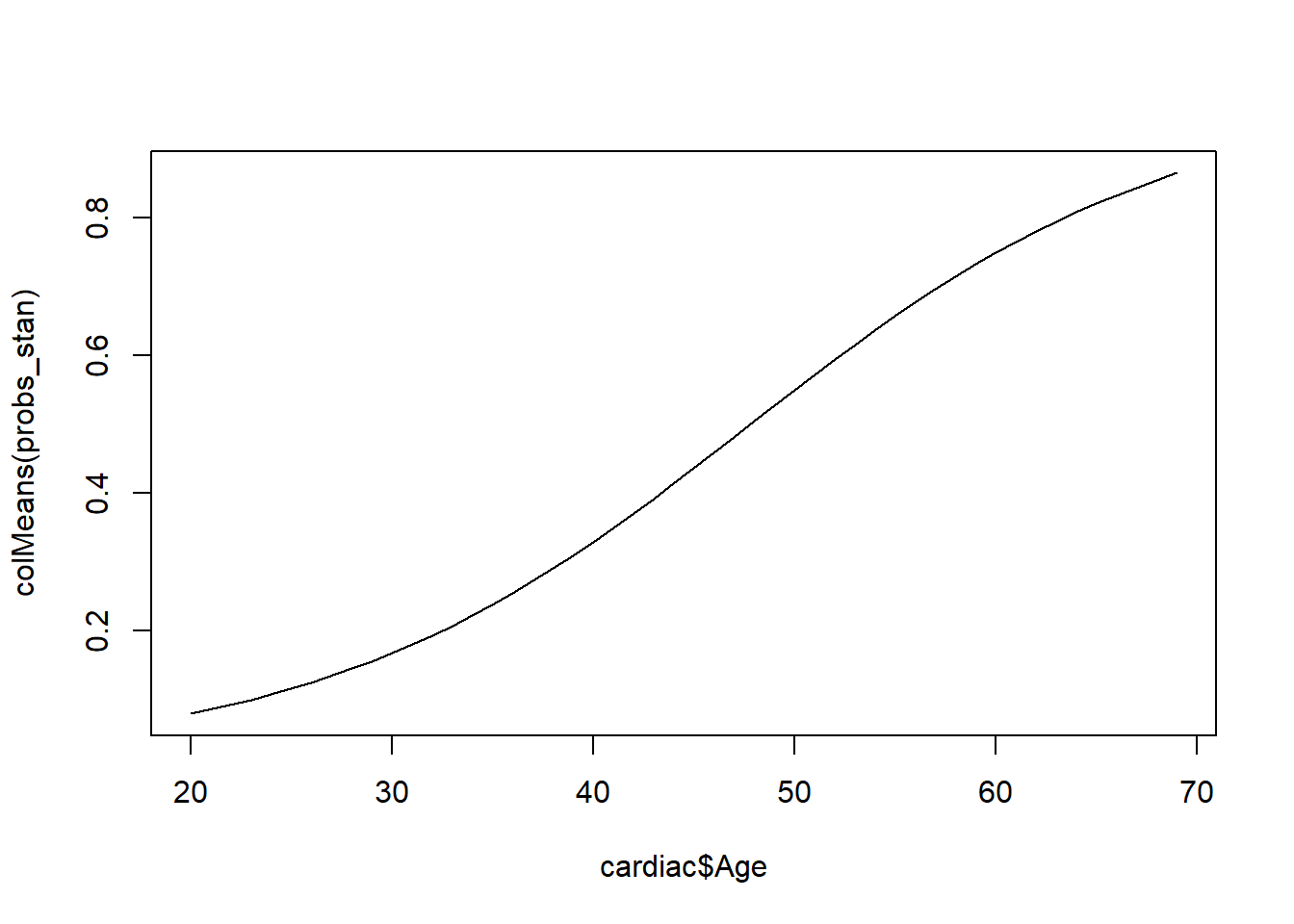

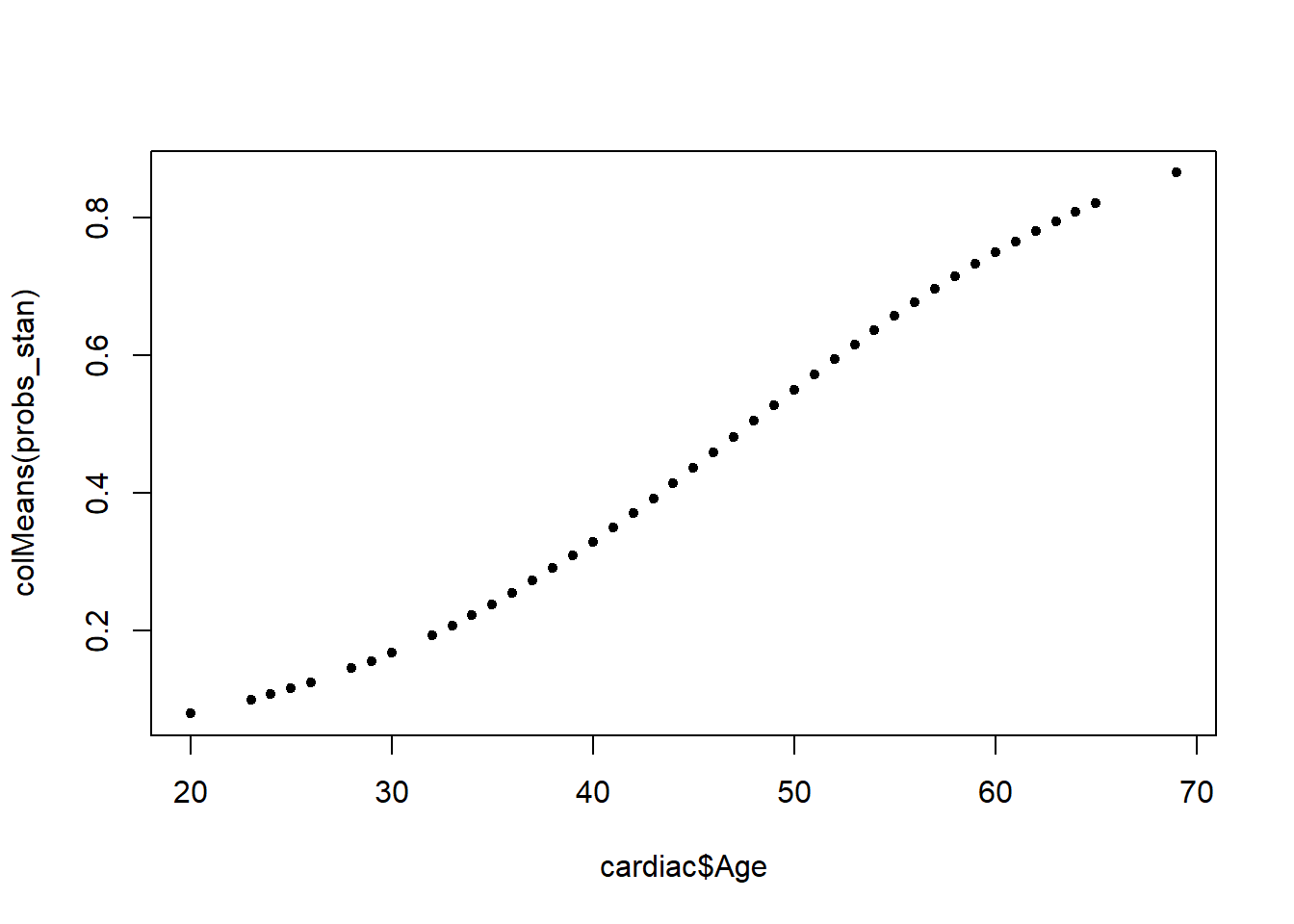

We can evaluate probabilities for each patients.

<- rstan:: extract (Logistic_stan, "p" )$ pplot (cardiac$ Age, colMeans (probs_stan), pch= 20 , type= "l" )plot (cardiac$ Age, colMeans (probs_stan), pch= 20 )